Big data is so large and complex that traditional data-processing applications cannot handle it; better applications are needed to manipulate it. Big data grows and becomes available at an exponential rate, and it is both structured and unstructured. Big data might be as important to society as it is to business. More data may lead to more accurate analysis: it can challenge wrong-headed intuitive beliefs with concrete proof (for example, these surprising words that get shared in content on social media sites).

Companies like Uber, Netflix, Pivotal Software Inc., and Affectiva use and manipulate big data, with applications and frameworks such as Hadoop, R, and Pivotal Big Data Suite, to accomplish their ends.

The definition of Big Data consists of five parts: Volume, Velocity, Variety, Variability, and Complexity. The first four are called the four V’s of big data.

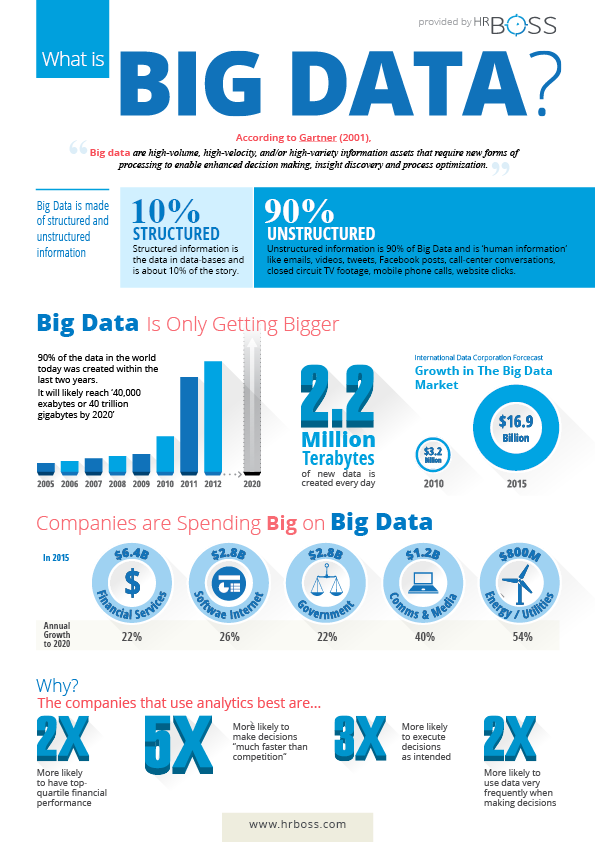

Source: HRBoss.com

Volume:

The term “volume” as used in the context of big data means the quantity of data that is generated. Many factors drive data volume up: transaction data is stored for years, unstructured data streams in from social media, and the amount of sensor and machine-to-machine data being collected keeps growing. Excessive data volume was a problem in the past, but as computing power grows we are increasingly able to process it in meaningful ways.

The size of the data determines its value, its potential, and whether it counts as big data at all.

Velocity:

The term “velocity” as used in the context of big data means how fast data is generated and processed. Data is streaming in at unprecedented speed and must be dealt with in a timely manner. Most organizations find it a challenge to react quickly enough to keep up with data velocity. One example is Google: when it changes its algorithms, it has to ensure that it has the processing power to search its data fast enough for consumers.

Variety:

Data comes in all types of formats. Structured numeric data comes in traditional databases. Unstructured data arrives as documents, email, video, audio, stock ticker data, and financial transactions. Many organizations still struggle with managing, merging, and governing these different formats of data.

Variability:

Data flows are not consistent. Searches or general interest in certain topics, on social media for example, experience peaks and valleys. Data analysis techniques allow the data scientist to mine this type of unstable data and still draw meaningful conclusions from it.
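As a rough illustration of spotting a peak in uneven data, the sketch below flags days whose counts sit far above the average. The daily counts, the `find_peaks` name, and the threshold of two standard deviations are all assumptions made for this example, not a recommended method.

```python
from statistics import mean, pstdev

# Hypothetical daily mention counts for one topic (made-up numbers).
daily_mentions = [100, 110, 95, 105, 400, 98, 102]


def find_peaks(counts, threshold=2.0):
    """Return indexes of days more than `threshold` standard deviations above the mean."""
    avg = mean(counts)
    spread = pstdev(counts)
    if spread == 0:
        # All days are identical, so nothing stands out.
        return []
    return [i for i, c in enumerate(counts) if (c - avg) / spread > threshold]


peaks = find_peaks(daily_mentions)
print(peaks)
```

A real analysis would likely use a rolling window or a seasonal model, since a single global average hides slower trends.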

Complexity:

If a large volume of data comes from multiple sources, then data management can become a very complex process. The data has to be linked, connected, and correlated so that the information can be understood and the analysis does not become unmanageable.
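Linking data from several sources usually comes down to finding a key the sources share. The sketch below is a minimal example under that assumption: the three record lists, the `customer_id` key, and the `link_sources` function are hypothetical and stand in for real systems.

```python
from collections import defaultdict

# Hypothetical records from three sources that share a customer_id key.
crm_records = [
    {"customer_id": 1, "name": "Ada"},
    {"customer_id": 2, "name": "Grace"},
]
transactions = [
    {"customer_id": 1, "amount": 30.0},
    {"customer_id": 1, "amount": 12.5},
    {"customer_id": 2, "amount": 99.0},
]
social_mentions = [
    {"customer_id": 2, "text": "Great service!"},
]


def link_sources(crm, purchases, mentions):
    """Combine per-source records into one profile per customer_id."""
    profiles = defaultdict(
        lambda: {"name": None, "total_spent": 0.0, "mentions": []}
    )
    for row in crm:
        profiles[row["customer_id"]]["name"] = row["name"]
    for row in purchases:
        profiles[row["customer_id"]]["total_spent"] += row["amount"]
    for row in mentions:
        profiles[row["customer_id"]]["mentions"].append(row["text"])
    return dict(profiles)


profiles = link_sources(crm_records, transactions, social_mentions)
print(profiles[1])
```

In practice the hard part is that sources rarely agree on a clean key, so matching and deduplication take most of the effort.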

Sources of Big Data:

These advances in analyzing big data have come only as a result of the growing ability to store and manipulate it that has arisen in the last few years. Sources of Big Data can be separated into six broad categories: Archives, Transactional Data, Enterprise Data, Social Media, Public Data, and Activity Generated.

- Archives:

These days, organizations just don’t want to throw away data. Even when the data is no longer required for any foreseeable reason, storage is getting so affordable that many companies choose to archive everything they can: scanned documents, scanned copies of agreements, records of ex-employees and completed projects, and banking transactions older than compliance regulations require. They are even going as far as collecting as much data as they can along the way. This type of data is called Archive Data.

- Transactional Data:

Most enterprises perform some sort of transactions, whether they arise from web or mobile apps, CRM systems, or other applications. One or more relational databases usually serve as the backend infrastructure that supports these transactions. This type of data is mostly structured and is referred to as Transactional Data.

- Enterprise Data:

Enterprises store large volumes of data in various formats. Common ones include Word documents, spreadsheets, presentations, PDF files, XML documents, emails, flat files, and legacy formats. Data like this, which is spread across an organization, is called Enterprise Data.

- Social Media:

We all know what this is. Countless interactions via networks like Twitter, Facebook, and Pinterest accrue massive amounts of minable data. These social networks produce mostly unstructured data consisting of images, text, audio, videos, and more. Data from these types of sources is called Social Media data (and proves especially useful for Social Media Marketing).

- Public Data:

This subsection of data includes data that is publicly available such as that published by governments, data published by research institutes, census data, Wikipedia, sample open source data feeds, data from weather and meteorological departments, and other data which are freely available to the public. This type of publicly accessible data is called Public Data.

- Activity Generated:

Machines create far more data than humans do. Data from sources such as surveillance cameras, satellites, industrial machinery, cell phone towers, sensors, and other machines is called Activity Generated data.

Two Data Analysis Technologies:

Hadoop:

One of the top reasons organizations use Hadoop is that it provides massive storage and enormous processing power for data of any format. It can also handle a virtually limitless number of simultaneous tasks or jobs. As the volume and variety of data coming from the internet keep increasing, that capacity becomes a key consideration.

Hadoop grew out of the distributed computing and processing portion of Nutch. In 2008, Yahoo released Hadoop as an open-source project. Today, the Apache Software Foundation (ASF), a global community of software developers and contributors, manages and maintains Hadoop.
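Hadoop’s classic processing model, MapReduce, breaks a job into a map step and a reduce step that can run in parallel across many machines. The sketch below imitates those steps on a single machine in plain Python to show the idea. It does not use Hadoop or its API, and the function names and sample lines are made up for illustration.

```python
from collections import defaultdict
from itertools import chain


def map_phase(line):
    """Emit a (word, 1) pair for every word in one line of input."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Group emitted values by key, as a framework would between the two phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Combine all values for one key into a single count."""
    return key, sum(values)


# Hypothetical input; on a cluster each line could be handled by a different worker.
lines = ["big data is big", "data is everywhere"]
mapped = chain.from_iterable(map_phase(line) for line in lines)
counts = dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())
print(counts)
```

Because each call to `map_phase` and `reduce_phase` depends only on its own input, the work can be spread over as many machines as are available, which is where the processing power comes from.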

Pivotal:

Pivotal has combined several open-source technologies into a platform called Pivotal Big Data Suite. The suite allows companies to modernize their data infrastructure, discover more insights using advanced analytics, and build analytic applications at scale. Pivotal Big Data Suite is cloud-ready, agile, and open.

A Case Study: Netflix:

Netflix delivers amazing personalization to customers. By tracking which movies customers rate or watch, Netflix can easily aggregate data about customers, trends, viewing habits, and more. That lets Netflix attempt to answer questions that most organizations cannot or will not even ask. With respect to covers and colors, these questions include the following:

- Which colors on covers for titles are most interesting to customers in general?

- Are customers trending at a given time towards specific types of covers? If yes, should personalized recommendations change automatically?

- Should different colors on covers be used for different customers? And many others…
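To make the first question concrete, here is a minimal sketch of how it could be answered from a viewing log. It is not Netflix’s method: the event records, field names, and the `play_rate_by_color` function are hypothetical, and the data is invented for the example.

```python
from collections import Counter

# Hypothetical viewing log: each event records which cover color was shown
# and whether the customer went on to play the title.
events = [
    {"customer": "a", "cover_color": "red", "played": True},
    {"customer": "a", "cover_color": "blue", "played": False},
    {"customer": "b", "cover_color": "red", "played": True},
    {"customer": "b", "cover_color": "green", "played": False},
    {"customer": "c", "cover_color": "blue", "played": True},
    {"customer": "c", "cover_color": "red", "played": False},
]


def play_rate_by_color(log):
    """Return the share of impressions that led to a play, per cover color."""
    shown = Counter(e["cover_color"] for e in log)
    played = Counter(e["cover_color"] for e in log if e["played"])
    return {color: played[color] / shown[color] for color in shown}


rates = play_rate_by_color(events)
best_color = max(rates, key=rates.get)
print(best_color, rates)
```

Grouping the same log by customer instead of across all customers would be one way to approach the question of using different colors for different people.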
