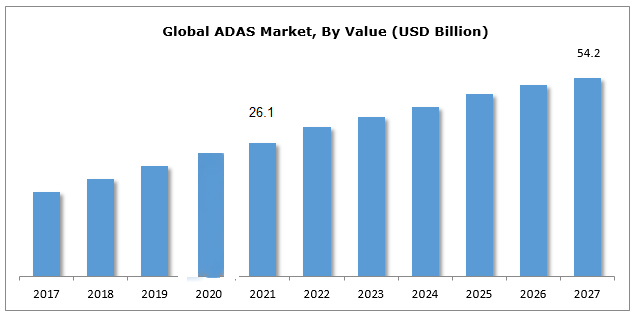

According to Verified Market Research, the Advanced Driver Assistance Systems (ADAS) Market size was valued at USD 25.92 Billion in 2021 and is projected to reach USD 55.66 Billion by 2030, growing at a CAGR of 10.8% from 2022 to 2030.

Advanced driver-assistance systems (ADAS) are technological solutions designed to reduce vehicle accidents and fatalities. When properly designed, these systems use a human-machine interface to improve the driver’s ability to react to dangers on the road and to make driving safer. Through early warnings and automated interventions, ADAS improves safety and shortens reaction times to potential threats.

Some of these systems come standard on certain vehicles, while others can be added later as aftermarket features, or even as entire systems, to tailor the vehicle to the driver’s preferences. They can provide critical information about traffic, upcoming road closures and blockades, congestion levels, and suggested routes to avoid congestion.

These systems can also be used to detect human driver fatigue and distraction and issue cautionary alerts, as well as to assess driving performance and make recommendations. In addition, ADAS can take over control from humans after assessing a threat, performing simple tasks (such as cruise control) or complex maneuvers (like overtaking and parking).

But the most significant benefit of assistance systems is that they enable communication between vehicles (V2V), between vehicles and infrastructure (V2I), and, more broadly, between vehicles and everything around them (V2X), including transportation management centers. This exchange of data improves vehicle vision, localization, planning, and decision-making.

Key Statistics:

- The global ADAS market size was US$ 26.1 billion in 2021

- 92.7% of new vehicles in the U.S. have at least one ADAS

- Automakers representing 99% of the U.S. new car market have committed to making AEB the first standard ADAS on all light-duty vehicles by September 1st, 2022

- Driver assistance technology could prevent 1.6 million crashes and 7,200 fatal crashes per year

- 84% of drivers believe ADAS features promote safe driving

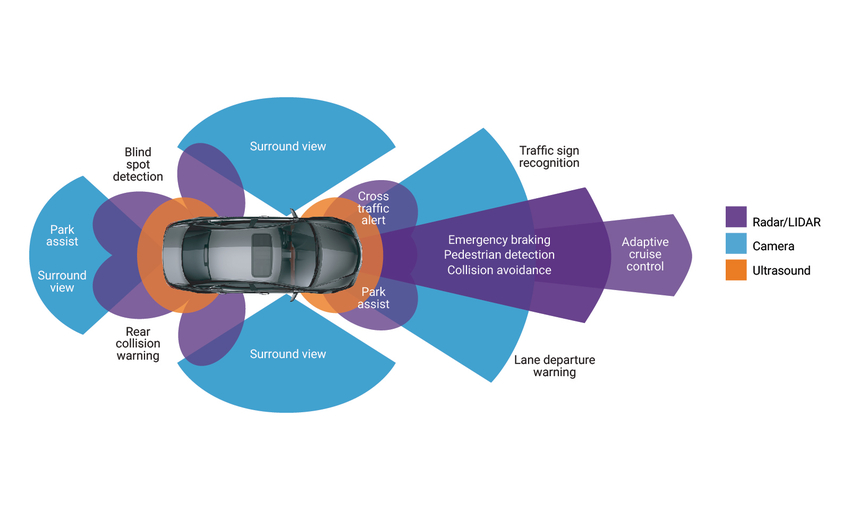

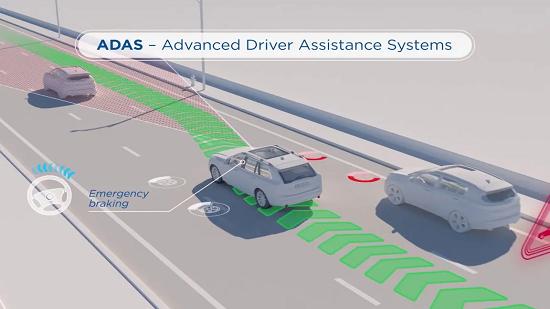

Advanced driver-assistance systems (ADAS) work by warning the driver of danger or even intervening to prevent an accident. ADAS-equipped vehicles can sense their surroundings, process the information in a computer system quickly and accurately, and provide the correct output to the driver.

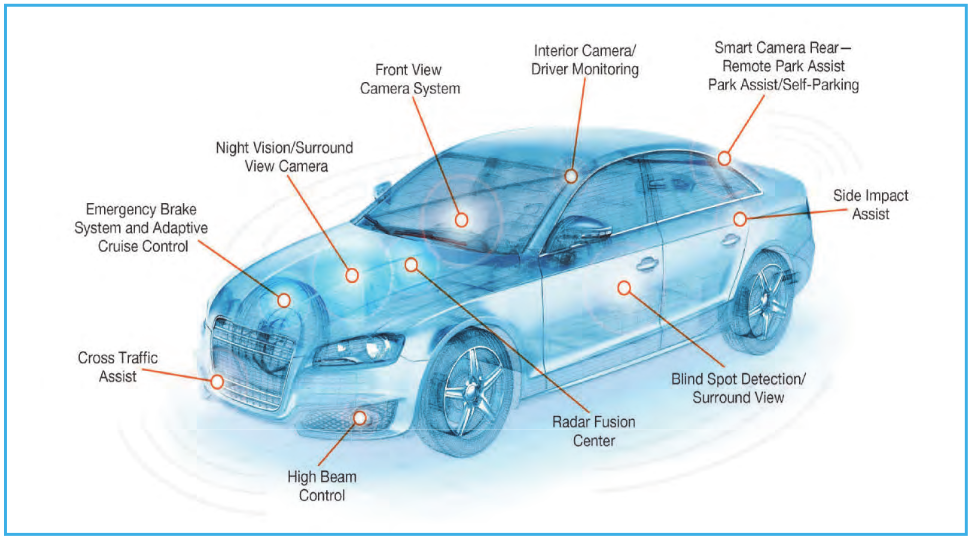

ADAS is built into the original design of most late-model vehicles, and it is upgraded as automobile manufacturers introduce new vehicle models and features. To enable safety features for cars and other vehicles, these systems use multiple data inputs. Some of these data sources include:

- Automotive Imaging – A series of high-quality sensor systems that mimic and exceed the capabilities of the human eye in terms of 360-degree coverage, 3D object resolution, visibility in challenging weather and lighting conditions, and real-time data.

- LiDAR – Light detection and ranging sensors emit laser pulses and time their reflections to build a 3D picture of the surroundings. LiDAR complements cameras and other sensors for computer vision and can distinguish between static and moving objects, adding another layer of coverage in blind-spot or bad-lighting situations.

- SONAR – Sound Navigation and Ranging sensors detect objects that are close to the vehicle. SONAR or ultrasound sensors are used in backup detection and self-parking systems in cars, trucks, and buses.

- RADAR – In ADAS-equipped vehicles, RADAR (Radio Detection and Ranging) sensors detect large objects or other vehicles in front of the vehicle. RADAR also keeps working in bad weather and other conditions that obstruct visibility.
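The ranging sensors in this list (LiDAR, SONAR, RADAR) share one basic idea: emit a pulse, time the echo, and convert the round-trip time into a distance. The sketch below illustrates that arithmetic only. The function name is hypothetical, and the speed of sound is an assumed nominal value for air at about 20 °C; real sensors add filtering, calibration, and temperature compensation.

```python
SPEED_OF_SOUND_M_S = 343.0          # assumed nominal value: air at about 20 °C
SPEED_OF_LIGHT_M_S = 299_792_458.0  # radio waves (RADAR) and laser light (LiDAR)


def echo_distance_m(round_trip_s: float, speed_m_s: float) -> float:
    """Distance to a reflecting object, given the echo's round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the object and back, so halve the total path.
    return speed_m_s * round_trip_s / 2.0


# Ultrasonic parking sensor: an echo after 5.83 ms is roughly 1 m away.
print(round(echo_distance_m(0.00583, SPEED_OF_SOUND_M_S), 2))

# Radar: an echo after 1 microsecond is roughly 150 m away.
print(round(echo_distance_m(1e-6, SPEED_OF_LIGHT_M_S), 1))
```

The large difference in propagation speed is one reason ultrasound suits short-range parking sensors while radar and LiDAR cover longer distances.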

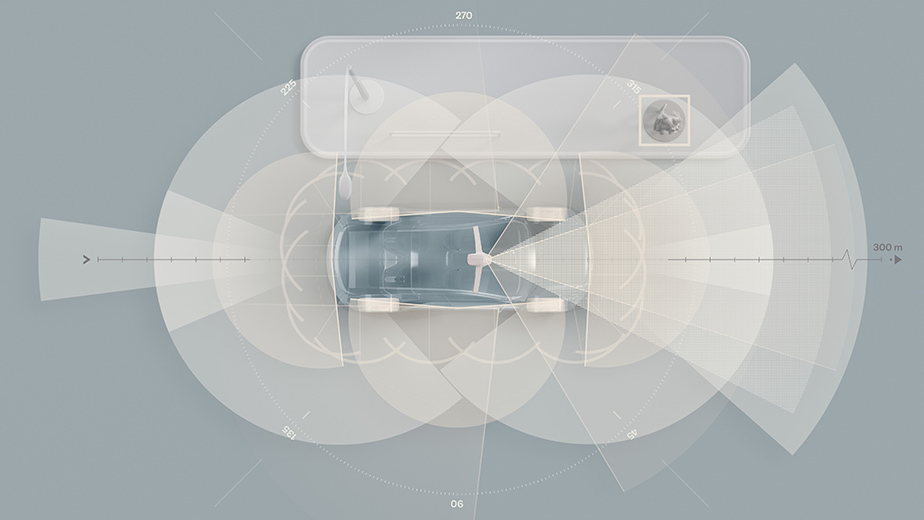

The architecture of advanced driver-assistance systems (ADAS) consists of a set of sensors, interfaces, and a powerful computer processor that integrates all of the data and makes real-time decisions. These sensors are constantly scanning the environment around the vehicle, sending data to the onboard ADAS computers for prioritization and action.
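To make "prioritization and action" concrete, here is a minimal sketch of an event queue in which the most urgent sensor event is handled first. The `SensorEvent` class, the priority scale, and the example events are all assumptions made for illustration; they do not describe any particular vendor's architecture.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class SensorEvent:
    priority: int                            # assumed scale: lower number = more urgent
    description: str = field(compare=False)  # not used when ordering events


def drain_by_priority(events):
    """Return event descriptions in the order they would be acted on."""
    heap = list(events)
    heapq.heapify(heap)
    ordered = []
    while heap:
        ordered.append(heapq.heappop(heap).description)
    return ordered


events = [
    SensorEvent(3, "navigation: congestion ahead"),
    SensorEvent(1, "radar: obstacle closing fast"),
    SensorEvent(2, "camera: drifting toward lane marking"),
]
for description in drain_by_priority(events):
    print(description)
```

A heap keeps the most urgent event cheap to retrieve even as new sensor readings keep arriving, which is why it is a common choice for this kind of scheduling.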

1. Passive ADAS

In passive advanced driver-assistance systems (ADAS), the computer simply informs the driver of an unsafe condition, regardless of the number or types of sensors installed. The driver must take action to prevent that condition from resulting in an accident.

Common warning methods include sounds, flashing lights, and sometimes physical feedback, such as a steering wheel that shakes to warn the driver that the lane they are moving into is occupied by another vehicle (blind spot detection). The most common Passive ADAS functions include:

- Anti-lock Braking System (ABS) – Anti-lock brakes keep the wheels from locking during emergency braking, so the car does not skid and stays steerable.

- Electronic Stability Control (ESC) – It assists the driver in avoiding under- or over-steering, especially when driving in unusual conditions.

- Traction Control System (TCS) – It assists the driver in maintaining adequate traction when negotiating turns and curves by incorporating aspects of both ABS and ESC.

- Back-up Camera – When parking or backing up, a backup camera gives the driver a view behind the vehicle.

- Lane Departure Warning (LDW) – It notifies the driver if the vehicle is drifting out of its lane.

- Forward Collision Warning (FCW) – It warns the driver to brake in order to avoid a collision.

- Blind Spot Detection – It warns the driver that a vehicle is approaching from behind.

- Parking Assistance – It warns the driver when the front or rear bumper is approaching an object while maneuvering at low speed into a parking space.
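As an illustration of how a passive function only warns and leaves the action to the driver, the following sketch implements a simple lane departure check. The function name and every default value (lane width, vehicle width, warning margin) are assumptions chosen for the example, not figures from any production system.

```python
def lane_departure_warning(lateral_offset_m, lane_width_m=3.7, vehicle_width_m=1.8,
                           margin_m=0.2, turn_signal_on=False):
    """Return True when the vehicle's edge is within margin_m of a lane marking.

    lateral_offset_m is the distance of the vehicle's centre from the lane centre
    (either sign). All default dimensions are assumed, illustrative values.
    """
    if turn_signal_on:
        return False  # an indicated lane change is treated as intentional
    # Gap between the nearer edge of the vehicle and the marking on that side.
    gap_to_marking_m = lane_width_m / 2 - (abs(lateral_offset_m) + vehicle_width_m / 2)
    return gap_to_marking_m < margin_m


print(lane_departure_warning(0.0))                       # centred in the lane
print(lane_departure_warning(0.8))                       # drifting toward a marking
print(lane_departure_warning(0.8, turn_signal_on=True))  # signalled lane change
```

The function returns only a flag; what to do about it (a chime, a flashing icon, a vibrating steering wheel) is left to the warning layer, and steering remains with the driver.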

2. Active ADAS

Active advanced driver-assistance systems (ADAS), alongside passive safety systems, play a significant role in reducing traffic fatalities and the financial impact of vehicle accidents. In Active ADAS, the vehicle takes direct action. Developing such a system requires cutting-edge yet cost-effective RF technology that can be embedded in the vehicle to detect and classify exterior objects. The following are some examples of Active ADAS functions:

- Automatic Emergency Braking Systems – These use sensors to detect whether the vehicle is about to collide with another vehicle or other road objects. They can detect nearby traffic and warn the driver if there is a danger. To avoid a collision, some emergency braking systems can take preventive safety measures like tightening seat belts, reducing speed, and adaptive steering.

- Adaptive Cruise Control – ACC is useful on the highway, where it can be difficult for drivers to keep track of their speed and other vehicles over long periods of time. Depending on the actions of other objects in the immediate area, adaptive cruise control can automatically accelerate, slow down, and even stop the vehicle.

- Self Parking – Drivers can be alerted to blind spots with self-parking, allowing them to know when to turn the steering wheel and stop. Vehicles equipped with rear-view cameras provide a better view of the surroundings. By combining the input of multiple sensors, some systems can even complete parking without the driver’s assistance.

- Traffic Jam Assist – It combines adaptive cruise control and Lane Keeping Assist to provide semi-automated driver assistance during congested traffic events, such as lane closures, road construction, etc.

- Emergency Steering Assistance – In a situation where an evasive steering maneuver is initiated, the ESA assists the driver. During the maneuver, it provides additional steering torque and aids the driver in lateral vehicle guidance.

- 5G or V2X – The 5G ADAS feature provides V2X (vehicle-to-everything) communication between the vehicle and other vehicles or pedestrians, with increased reliability and lower latency. For real-time navigation, millions of vehicles now connect to cellular networks. This application will improve situational awareness, control or suggest speed adjustments to account for traffic congestion, and update GPS maps with real-time updates using existing methods and the cellular network.

Human error is to blame for the vast majority of traffic accidents. These advanced safety systems were created to automate and improve aspects of the driving experience in order to improve driver safety and habits. Advanced driver-assistance systems (ADAS) reduce the number of traffic fatalities by lowering the risk of human error.

In the automotive industry, these technologies can be divided into two groups: those that automate driving, such as automatic emergency braking systems, and those that assist drivers in improving their awareness, such as lane departure warning systems.

The goal of these safety systems is to improve road safety by lowering the overall number of traffic accidents and thus reducing vehicular injuries. They also reduce the number of insurance claims resulting from minor collisions that cause property damage but no injuries.

ADAS advantages include:

- Automated safety system adaptation and enhancement to improve driving among the general public. ADAS is designed to help drivers avoid collisions by using technology to warn them about potential hazards or take control of the vehicle to avoid them.

- Navigational warnings, such as automated lighting, adaptive cruise control, and pedestrian crash avoidance mitigation (PCAM), alert drivers to potential dangers such as vehicles in blind spots, lane departures, and more.

- Sensors have the potential to self-calibrate in the future to focus on the systems’ inherent safety and dependability.

Significant advances in automotive safety in the past (e.g., shatter-resistant glass, three-point seatbelts, airbags) were passive safety features aimed at reducing injuries during an accident. Today, ADAS actively increases safety by lowering the occurrence of accidents and injuries to occupants through the use of embedded vision.

Installing cameras in the vehicle calls for a new AI function that employs sensor fusion to recognize and process objects. Sensor fusion combines enormous volumes of data from image recognition software, ultrasonic sensors, lidar, and radar, similar to how the human brain processes information. This technology can physically react faster than a human driver could. It can analyze streaming video in real time, understand what it sees, and decide how to respond.
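One simple way to picture sensor fusion is to combine two noisy distance estimates of the same object, trusting the more precise sensor more. The sketch below uses inverse-variance weighting, a standard textbook technique chosen here for illustration; the article does not say which method any given ADAS uses, and production systems are far more elaborate. The sensor readings and variances are hypothetical.

```python
def fuse_ranges(measurements):
    """Fuse (range_m, variance_m2) pairs by inverse-variance weighting.

    Returns the fused range and its variance. Measurements with a smaller
    variance (more trustworthy) receive a larger weight.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / variance for _, variance in measurements]
    total_weight = sum(weights)
    fused_range = sum(w * r for w, (r, _) in zip(weights, measurements)) / total_weight
    return fused_range, 1.0 / total_weight


radar = (50.0, 0.25)   # hypothetical reading: 50 m, low variance
camera = (52.0, 1.0)   # hypothetical reading: 52 m, higher variance
fused_range, fused_variance = fuse_ranges([radar, camera])
print(round(fused_range, 2), round(fused_variance, 2))
```

The fused estimate lands closer to the radar reading because radar was given the smaller variance, and the fused variance is lower than either sensor's alone, which is the practical payoff of combining sensors.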

Some of the most common ADAS applications are:

Adaptive Cruise Control

Adaptive cruise control is especially useful on the highway, where drivers often struggle to keep track of their speed and other vehicles for extended periods of time. Depending on the actions of other objects in the immediate area, adaptive cruise control can automatically accelerate, slow down, and sometimes stop the car.
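The accelerate, slow down, and stop behavior can be sketched as a small rule: hold the driver's set speed on a free road, otherwise follow the vehicle ahead at a time-based gap. The function name, the two-second gap, the minimum standstill gap, and the gain are all assumed values for illustration, not parameters of any real system.

```python
def acc_target_speed(set_speed_mps, own_speed_mps, lead_distance_m=None,
                     lead_speed_mps=None, time_gap_s=2.0, min_gap_m=5.0, gain_per_s=0.2):
    """Choose a target speed in m/s for a simplified adaptive cruise control.

    With no lead vehicle detected, cruise at the set speed. Otherwise track the
    lead vehicle's speed, corrected by how far the actual gap is from the
    desired gap. All default parameters are illustrative assumptions.
    """
    if lead_distance_m is None or lead_speed_mps is None:
        return set_speed_mps  # free road: hold the driver's set speed
    desired_gap_m = min_gap_m + time_gap_s * own_speed_mps
    gap_error_m = lead_distance_m - desired_gap_m  # negative when following too closely
    follow_speed_mps = lead_speed_mps + gain_per_s * gap_error_m
    # Never exceed the set speed, and never command a negative speed.
    return max(0.0, min(set_speed_mps, follow_speed_mps))


print(acc_target_speed(30.0, 30.0))                                            # free road
print(acc_target_speed(30.0, 30.0, lead_distance_m=40.0, lead_speed_mps=25.0)) # too close
print(acc_target_speed(30.0, 2.0, lead_distance_m=5.0, lead_speed_mps=0.0))    # stopped traffic
```

Clamping the result to the set speed means the car never speeds up past what the driver asked for, even when the vehicle ahead pulls away.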

Glare-Free High Beam and Pixel Light

Glare-free high beam and pixel light use sensors to adjust to the darkness and surroundings of the car without disturbing approaching motorists. This innovative headlight program detects other vehicles’ lights and redirects the vehicle’s lights away from other road users, preventing them from being briefly blinded.

Adaptive Light Control

Adaptive light control adjusts the headlights of a vehicle to the lighting conditions outside. It adjusts the brightness, direction, and rotation of the headlights based on the vehicle’s surroundings and the level of darkness.

Automatic Parking

Automatic parking alerts drivers to blind spots, allowing them to know when to turn the steering wheel and come to a complete stop. Rearview cameras provide a greater view of the surroundings than typical side mirrors. By merging the data of several sensors, some systems can even finish parking without the driver’s assistance.

Autonomous Valet Parking

Autonomous valet parking is a novel technology that manages autonomous vehicles in parking lots using vehicle sensor meshing, 5G network connection, and cloud services. Sensors tell the car where it is, where it needs to go, and how to get there safely. All of this data is analyzed and used to control acceleration, braking, and steering until the vehicle is safely parked.

Navigation System

Car navigation systems use on-screen directions and audio prompts to assist drivers in following a route while remaining focused on the road. Some navigation systems can display precise traffic information and, if necessary, suggest an alternate route to avoid traffic bottlenecks. To reduce driver distraction, advanced systems may even include heads-up displays.

Blind Spot Monitoring

Blind spot detection systems use sensors to provide drivers with important information that is otherwise difficult or impossible to obtain. Some systems sound an alarm when they detect an object in the driver’s blind spot, such as when the driver tries to move into an occupied lane.
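A minimal sketch of that decision logic follows. The alarm tier (object present and the driver signalling toward that side) comes from the description above; the quieter indicator tier, the `BlindSpotAlert` names, and the function signature are assumptions added for the example.

```python
from enum import Enum


class BlindSpotAlert(Enum):
    NONE = "none"
    INDICATOR = "indicator"  # assumed quiet tier, e.g. a light in the mirror
    ALARM = "alarm"          # audible alarm when moving toward an occupied lane


def blind_spot_alert(object_on_left, object_on_right, turn_signal=None):
    """Pick an alert level; turn_signal is 'left', 'right', or None."""
    occupied = {"left": object_on_left, "right": object_on_right}
    # Escalate when the driver signals toward a side that is occupied.
    if turn_signal in occupied and occupied[turn_signal]:
        return BlindSpotAlert.ALARM
    if object_on_left or object_on_right:
        return BlindSpotAlert.INDICATOR
    return BlindSpotAlert.NONE


print(blind_spot_alert(True, False, turn_signal="left").value)
print(blind_spot_alert(True, False, turn_signal="right").value)
print(blind_spot_alert(False, False).value)
```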

Automatic Emergency Braking

Automatic emergency braking employs sensors to assess whether the vehicle is about to collide with another vehicle or other roadside objects. This system can detect adjacent vehicles and alert the driver to potential hazards. To avert an accident, some emergency braking systems can perform preventive safety steps such as tightening seat belts, slowing down, and engaging adaptive steering.
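A common way to reason about an imminent collision is time-to-collision (TTC): the remaining gap divided by the closing speed. The sketch below stages a warning before braking based on TTC. The function names and both thresholds are hypothetical values picked for the example; real systems tune such thresholds per vehicle and per speed range.

```python
import math


def time_to_collision_s(gap_m, closing_speed_mps):
    """Seconds until impact if nothing changes; infinite when the gap is not closing."""
    if closing_speed_mps <= 0:
        return math.inf
    return gap_m / closing_speed_mps


def aeb_action(gap_m, closing_speed_mps, warn_ttc_s=2.6, brake_ttc_s=1.4):
    """Return 'none', 'warn', or 'brake' (thresholds are assumed, illustrative values)."""
    ttc_s = time_to_collision_s(gap_m, closing_speed_mps)
    if ttc_s <= brake_ttc_s:
        return "brake"  # the stage where belts could also be tightened
    if ttc_s <= warn_ttc_s:
        return "warn"   # alert the driver first
    return "none"


print(aeb_action(40.0, 10.0))  # TTC 4 s
print(aeb_action(20.0, 10.0))  # TTC 2 s
print(aeb_action(10.0, 10.0))  # TTC 1 s
```

Staging the response this way gives the driver a chance to react before the system intervenes on its own.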

Crosswind Stabilization

This relatively new ADAS feature assists the vehicle in dealing with high crosswinds. This system’s sensors may detect high pressures acting on the vehicle while driving and apply brakes to the wheels affected by crosswinds.

Driver Drowsiness Detection

Driver drowsiness detection notifies drivers when they are sleepy or distracted on the road. There are various ways to tell if a driver’s concentration is waning. In one approach, sensors assess the driver’s head movement and pulse rate to see if they indicate drowsiness. Other systems send out driver alerts comparable to lane departure warning signals.

Driver Monitoring System

Another method of gauging the driver’s attentiveness is the driver monitoring system. Its video sensors can detect whether the driver’s eyes are fixed on the road or wandering. Driver monitoring systems can provide warnings in the form of noises, vibrations in the steering wheel, or flashing lights. In some situations, the system will take the extreme measure of bringing the vehicle to a complete stop.
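The escalation from warnings to stopping the vehicle can be sketched as a timer on how long the driver's eyes have been off the road. The `AttentionMonitor` class, the per-frame `eyes_on_road` input, and the three time thresholds are assumptions for illustration; the gaze detection itself is outside the scope of this example.

```python
class AttentionMonitor:
    """Escalate responses as continuous eyes-off-road time grows (assumed thresholds)."""

    def __init__(self, warn_after_s=2.0, vibrate_after_s=4.0, stop_after_s=8.0):
        self.warn_after_s = warn_after_s
        self.vibrate_after_s = vibrate_after_s
        self.stop_after_s = stop_after_s
        self.eyes_off_road_s = 0.0

    def update(self, eyes_on_road, dt_s):
        """Feed one camera observation covering dt_s seconds; return the response."""
        if eyes_on_road:
            self.eyes_off_road_s = 0.0  # attention restored: reset the timer
        else:
            self.eyes_off_road_s += dt_s
        if self.eyes_off_road_s >= self.stop_after_s:
            return "controlled stop"
        if self.eyes_off_road_s >= self.vibrate_after_s:
            return "steering wheel vibration"
        if self.eyes_off_road_s >= self.warn_after_s:
            return "audible warning"
        return "no action"


monitor = AttentionMonitor()
for _ in range(5):  # 2.5 s of looking away, in 0.5 s steps
    response = monitor.update(eyes_on_road=False, dt_s=0.5)
print(response)
print(monitor.update(eyes_on_road=True, dt_s=0.5))
```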

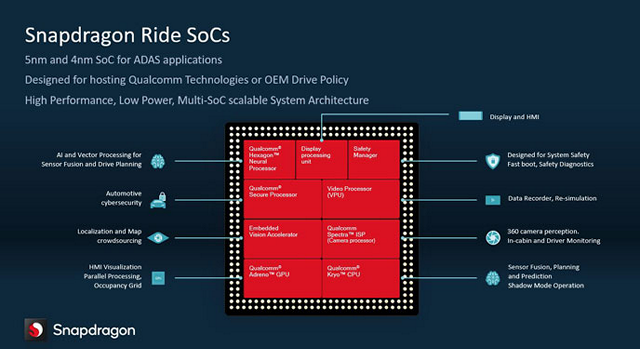

Qualcomm ADAS

Since its inception in January 2020 as an integral component of the Snapdragon Digital Chassis, the Snapdragon Ride Platform has gained traction with an increasing number of global automakers and Tier 1 suppliers. Qualcomm unveiled the Snapdragon Ride Vision System at CES 2022, a product portfolio expansion that includes an open, scalable, and modular computer vision software stack built on an industry-leading 4-nanometer (4nm) process node.

The Arriver Vision stack is linked with the Vision System-on-Chip (SoC), enabling an optimal implementation of front and surround-view cameras for advanced driver assistance systems (ADAS) and automated driving (AD). The Snapdragon Ride Platform has grown to become one of the most scalable, capable, and adaptable ADAS/AD solutions available today when combined with the Arriver Drive Policy stack and an already extensive range of hardware and software options.

In a recent development, Arriver, BMW, and Qualcomm will be jointly developing a software stack designed for level 2 ADAS and level 3 Autonomous Driving (AD). The effort combines BMW’s level 2 AD stack, Arriver’s Vision Perception and NCAP (New Car Assessment Program) Drive Policy, and Qualcomm’s Snapdragon Ride platform. The joint development effort will combine decades of R&D by the three companies and more than 1,400 engineers located in China, the Czech Republic, Germany, Romania, Sweden, and the U.S.

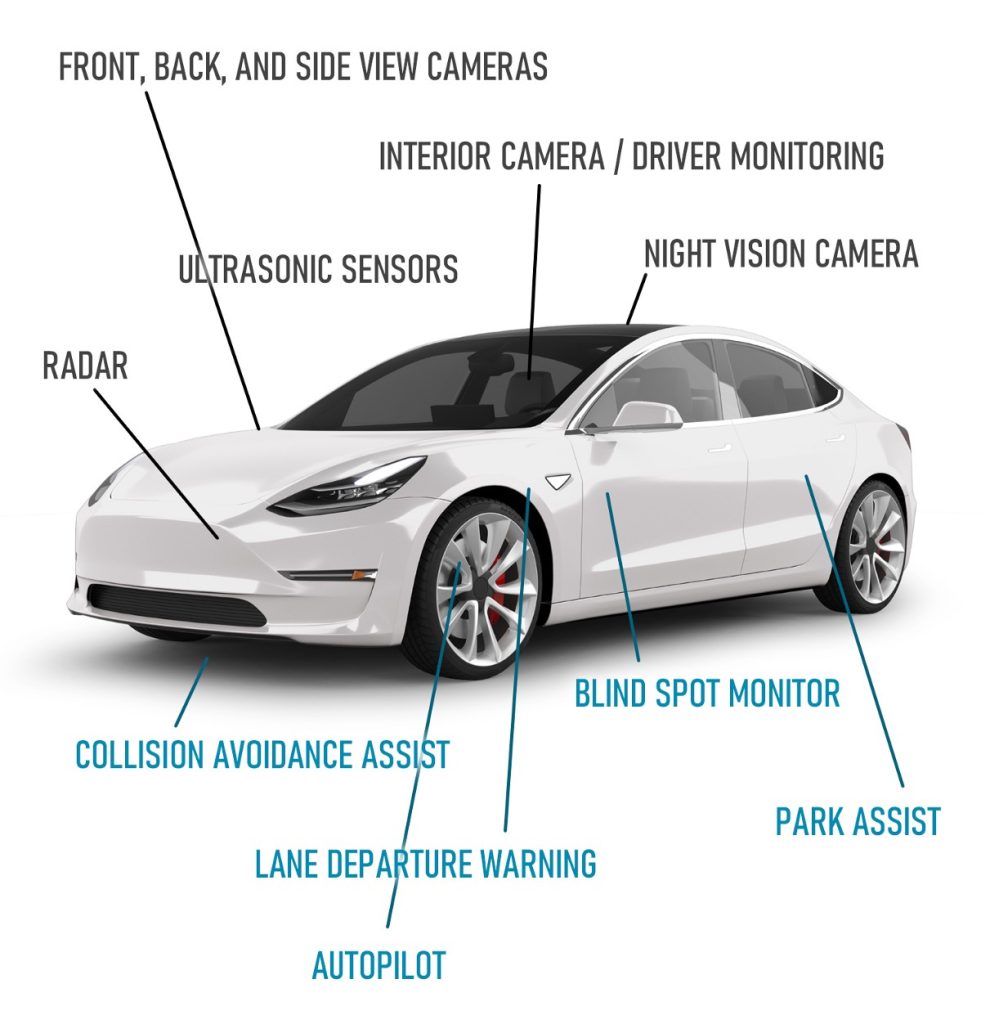

Tesla ADAS

Tesla has long been a leader in autopilot technology in automobiles, even naming its system ‘Autopilot.’ Not only is its system one of the most complex and accurate on the road, but it is also constantly updated over the air (much like your smartphone), so the vehicles continue to improve. The main disadvantage is that driver monitoring relies solely on steering wheel inputs, rather than facial monitoring, to detect whether the driver is paying attention.

Tesla employs eight cameras around the vehicle to provide a complete 360-degree view, as well as a front-facing radar and long-range ultrasonic sensors. It makes use of a powerful machine learning computer (dubbed the Full Self-Driving Computer, or Hardware 3) that began shipping in early 2019.

Supported Models:

Autopilot and Full Self-Driving functions are available as an option on all current Tesla vehicles (the Tesla Model 3, Model Y, Model S, and Model X) with AP2 and above. Older Tesla vehicles (pre-2016) with AP1 have an older version of Autopilot with fewer functions.

General Motors ADAS

General Motors is widely considered one of the leading developers of self-driving technology, having introduced its highly rated Super Cruise technology on the Cadillac CT6 while working through Cruise, a branch of GM, to create completely self-driving fleet cars (like taxis). Super Cruise is also available in a more limited version on the new Chevy Bolt.

What It’s Called:

Super Cruise

Technology Used:

Intel’s Mobileye platform, Trimble RTX for location, forward-facing cameras, side cameras, radar, and an inside camera from FOVIO for eye tracking are all included in Super Cruise.

VW / Audi / Porsche ADAS

The Volkswagen Group owns various brands, including Volkswagen, Audi, and Porsche. Audi has recently made significant moves into self-driving, promoting a Level 3 system in Europe that, regrettably, is not currently available in the United States due to regulatory issues. So, for the time being, Audi’s autopilot functions are comparable to others.

What It’s Called:

Active Lane Assist with Stop & Go (for faster speeds) and Traffic Jam Assist (for lower speeds). “Traffic Jam Pilot” will be the name of the future Level 3 version, which will be hands-off at speeds under 37 mph. It is currently available in various other countries but not in the United States. For foreign markets, there is also a technology named “Adaptive Drive Assist.”

Technology Used:

Audi has made significant moves into self-driving technology recently, including the first Lidar unit in a consumer vehicle, the A8 (and also the A6 and Q8), as well as its new zFAS controller, which merges sensor inputs into a single computing unit. For perceptual inputs, they also collaborate with Mobileye (EyeQ 4 chips).

BMW ADAS

BMW has long had adaptive cruise control with rudimentary lane centering, but new technology was introduced with some 2019 models. The system cannot be updated over the air, so the vehicle must be taken to a dealer for updates.

What It’s Called:

Driving Assistant Pro with Extended Traffic Jam Assistant

Technology Used:

BMW uses the Mobileye EyeQ platform in the Driving Assistant package, along with ZF control software, and the EyeQ 4 chip with a forward-looking tri-focal camera set on the latest models. Radar sensors are fitted at the front and back, and an eye monitoring camera is available as an option.

Kia / Hyundai ADAS

Hyundai and Kia are affiliated automakers within Hyundai Motor Group that build distinct but comparable vehicles on shared platforms and parts. Hyundai invested in self-driving firm Aurora in 2019, but the technology has yet to be released to the public. Kia and Hyundai currently offer Level 2 technology in some of their vehicles that is best-in-class outside of advanced systems like Tesla’s Autopilot and GM’s Super Cruise.

What It’s Called:

Smart Cruise Control with Stop and Go (SCC) and Lane Following Assist (LFA)

Highway Driving Assist (HDA) combines LFA and SCC and adjusts to speed limits automatically.

Technology Used:

Hyundai/Kia now uses an in-house technology dubbed HDA2 (Highway Driving Assist 2), but may leverage technologies from the Aurora investment in the future. They have also previously collaborated with Intel / Mobileye and will most likely use its EyeQ chips.

Volvo ADAS

Volvo has long been a safety technology leader, and it was one of the first firms to introduce advanced safety systems and lane centering across its whole car lineup. It recently ran into some self-driving difficulties after deciding to switch platforms, which delayed more complex autopilot-like functionality.

What It’s Called:

Pilot Assist (the latest version is technically Pilot Assist II)

The 2022/2023 version will be known as “Ride Assist” (or “Highway Assist”).

Technology Used:

Volvo now uses the Mobileye EyeQ 3 platform, as well as a front-facing camera and radar (Delphi’s RaCAM – Radar and Camera Sensor Fusion System, which is mounted on the windshield). It plans to switch from Mobileye to the NVIDIA Orin chipset with “Ride Assist” in 2022 or 2023, and to add front-facing Luminar LiDAR with the support of Zenseact on the software side.

Mercedes-Benz ADAS

Mercedes-Benz pioneered adaptive cruise control with its high-end S-class sedan in the late 1990s. As a luxury car manufacturer, Mercedes has always made sure that its vehicles have the most up-to-date technology, but it has recently fallen behind in terms of autopilot functions.

What It’s Called:

Driver Assistance Package PLUS contains features such as DISTRONIC Active Distance Assist, Active Steering Assist, Active Lane Keeping Assist, and Active Lane Change Assist.

Technology Used:

Mercedes collaborates with Bosch and NVIDIA to power its systems using a combination of camera and radar inputs.

Although advanced driver-assistance systems (ADAS) help us drive more safely, we must remember that even with the best system, the driver still has to stay cautious and follow the rules of the road. ADAS has limitations, some of which relate to weather or road conditions. Other disadvantages of ADAS include:

- American insurers do not provide driver discounts for vehicles equipped with ADAS. This is due to a lack of solid data from automakers demonstrating increased road safety, though some insurance companies have recognized ADAS’s significant potential to reduce the number of driving-related accidents.

- There are various options, as well as training and implementation challenges. While the technology is available on the market, many drivers are overwhelmed by the options and are unsure which would be the best fit for them. Furthermore, even after such systems have been installed and implemented, there is the issue of training drivers to use them to their full potential in order to maximize the risk-reducing features of the system.

Advanced driver-assistance systems (ADAS) features evolve as technology and vehicle engineering advance. These safety systems are now the most desired features among drivers looking for a safer vehicle. ADAS is currently at Level 2 (Partial Driving Automation), where the vehicle can control both steering and acceleration/deceleration but isn’t fully self-driving: a human sits in the driver’s seat and can take control of the vehicle at any time.
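The levels referred to here follow the six-level driving automation scale defined in SAE J3016, which runs from Level 0 to Level 5. A minimal sketch of that scale as an enumeration is shown below; the enum member names and the helper function are naming choices made for this example.

```python
from enum import IntEnum


class AutomationLevel(IntEnum):
    """Driving automation levels on the SAE J3016 scale (member names are illustrative)."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2      # where the article places today's ADAS
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def driver_must_supervise(level):
    """Through Level 2, the human must monitor the road at all times."""
    return level <= AutomationLevel.PARTIAL_AUTOMATION


for level in AutomationLevel:
    print(level.value, level.name, driver_must_supervise(level))
```

The jump from Level 2 to Level 3 is the point where responsibility for monitoring the road starts to shift from the driver to the system under defined conditions.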

Moving toward fully autonomous vehicles that can sense their surroundings and operate without the need for human intervention will require a more capable electronic architecture in these vehicles. In the future, ADAS will offer greater accuracy, power efficiency, and performance by incorporating the latest embedded computer vision and deep learning techniques into automotive SoCs.

FAQs

Advanced Driver-Assistance Systems (ADAS) refer to a set of technologies integrated into modern automobiles to enhance safety and improve driving experience. ADAS uses sensors, cameras, and algorithms to assist drivers in various aspects of driving, such as collision avoidance, lane-keeping, adaptive cruise control, and parking assistance.

ADAS helps prevent accidents and reduce the severity of collisions by providing real-time feedback and intervention to drivers. It can detect potential hazards, warn drivers about dangerous situations, and even take control of the vehicle in emergency situations to avoid accidents.

ADAS in automobiles typically include features like adaptive cruise control, lane departure warning, automatic emergency braking, blind-spot monitoring, rear cross-traffic alert, and parking assist. These components work together to improve driving safety and convenience.

While ADAS is designed to work effectively in various driving conditions, certain systems may have limitations. Factors like inclement weather, poor visibility, and road conditions may affect the performance of ADAS features. Drivers should remain attentive and ready to take control of the vehicle when necessary.

ADAS features are becoming increasingly common in new vehicles, especially in high-end models and those with advanced safety packages. However, not all vehicles come equipped with ADAS as standard. Some manufacturers offer ADAS as optional upgrades or in specific trim levels.

ADAS features can positively impact insurance rates as they reduce the likelihood of accidents and enhance vehicle safety. Some insurance companies offer discounts for vehicles equipped with certain ADAS technologies, as they are seen as a measure to mitigate risks.

Deepak Wadhwani has over 20 years of experience in software/wireless technologies. He has worked with Fortune 500 companies including Intuit, ESRI, Qualcomm, Sprint, Verizon, Vodafone, Nortel, Microsoft and Oracle in over 60 countries. Deepak has worked on Internet marketing projects in San Diego, Los Angeles, Orange County, Denver, Nashville, Kansas City, New York, San Francisco and Huntsville. Deepak has been a founder of technology startups, including one of the first city guides, online yellow pages, and web-based enterprise solutions. He is an internet marketing and technology expert and co-founder of a San Diego Internet marketing company.